Anthropic vs. Chinese AI: Anthropic Accuses DeepSeek of Massive AI Data Theft Ahead of R2 Launch

February 24, 2026

06:12

The brewing “Cold War” between US and Chinese AI giants just hit a boiling point. On Tuesday, Anthropic, the San Francisco-based creator of Claude AI, officially accused three major Chinese AI labs, DeepSeek, MiniMax, and Moonshot AI, of “industrial-scale” data theft.

The allegations suggest a sophisticated campaign to “siphon” the intelligence of Claude to bolster Chinese models, just as DeepSeek prepares to launch its highly anticipated R2 model.

In a detailed blog post, Anthropic alleged that these companies bypassed safety protocols to “cheat” their way to better performance. The company provided concrete figures to back its claims:

Anthropic refers to this process as distillation: training a smaller or newer model on the outputs of a superior one. While distillation is a common internal practice for companies like OpenAI and Anthropic to optimize their own models, Anthropic argues that doing so without permission is “illicit” and strips away vital safety guardrails.

“Models built through illicit distillation are unlikely to retain those safeguards, meaning that dangerous capabilities can proliferate with many protections stripped out entirely,” Anthropic stated.

The “Hypocrisy” Debate: Internet Reacts with Memes and Malice

Despite the gravity of the accusations, the court of public opinion isn’t exactly siding with the US giant. Social media platforms like X (formerly Twitter) were quickly flooded with accusations of “gaslighting.”

Critics pointed to Anthropic’s own recent legal hurdles, including a $1.5 billion settlement involving authors who accused the company of using pirated books from torrent sites to train Claude.

Key voices from the internet:

- The Content Creator: “I spent years building a website… Claude parrotted it almost word-for-word. Spare us the gaslighting,” wrote one user.

- The Privacy Advocate: Others noted that the Chinese companies actually paid for API access, unlike the raw scraping methods often used by US companies on public websites.

- Elon Musk: The xAI boss didn’t hold back, calling Anthropic “smug” and “hypocritical,” adding, “Anthropic is guilty of stealing training data at massive scale… This is just a fact.”

Geopolitical Stakes: Chips, Exports, and the R2 Looming

This isn’t just a corporate spat; it’s a matter of national security. The timing of Anthropic’s attack coincides with two major events:

- Export Control Scandals: Reports recently surfaced via Reuters suggesting DeepSeek may have used advanced Nvidia Blackwell chips—currently restricted by US export laws—at a data center in Inner Mongolia.

- The DeepSeek R2 Launch: DeepSeek, which shocked the industry with its R1 model, is rumored to release DeepSeek R2 within the week. Early whispers suggest R2 could match or even exceed the capabilities of Claude 3.5 or GPT-4o.

| Company | Model | Status | Allegation |
| --- | --- | --- | --- |
| Anthropic | Claude 3.5 | Proprietary | Accuser (Data Theft) |
| DeepSeek | R1 / R2 | Open-Source | Accused (Distillation) |
| Moonshot AI | Kimi | Private/API | Accused (Distillation) |
| MiniMax | Abab | Private/API | Accused (Distillation) |

The core of the tension lies in the open-source vs. proprietary battle. Chinese models like DeepSeek are often open-source, allowing global developers to use their “weights” for free or at a low cost.

If Chinese models achieve parity with American models by using American data, the commercial “moat” around companies like Anthropic and OpenAI could evaporate. For the US, this isn’t just about “cheating”; it’s about losing the economic and strategic lead in the most important technology of the century.

Recent Posts

As negotiations around the Iran conflict gather momentum, a new layer of intrigue has emerged: Tehran’s reported preference for U.S. Vice President JD Vance over other key divs in Donald Trump’s inner circle. The choice...

March 25, 2026

11:50 am

need a new car? rent to own cars no credit check ...

March 25, 2026

11:39 am

A decades-old newspaper ad by Donald Trump is back in circulation, and it’s raising uncomfortable, timely questions. In 1987, Trump spent nearly $100,000 on full-page ads criticizing U.S. foreign policy in the Persian Gulf. Today,...

March 25, 2026

11:48 am

want an suv with easy access and comfort for seniors? here’s how to get it!...

March 25, 2026

11:31 am

A dramatic security breach at the Chinese Embassy in Tokyo has triggered a fresh diplomatic tensions between Japan and China. Japanese authorities have called the incident “regrettable,” while Beijing has responded with sharp criticism, warning...

March 25, 2026

11:39 am

drive into the future with the 2025 subaru forester...

March 25, 2026

11:37 am

With fewer than 100 days to go before the FIFA World Cup 2026 kicks off, a seemingly alarming development has surfaced in one of the host cities: Philadelphia. FIFA has canceled roughly 2,000 hotel room...

March 25, 2026

11:31 am

2025 jeep wrangler price one might not want to miss!...

March 25, 2026

11:10 am

Within days of renewed U.S.-Iran tensions, a striking detail has emerged: Saudi Crown Prince Mohammed bin Salman has reportedly encouraged President Donald Trump to press forward with military operations against Iran. The reported private push,...

March 25, 2026

11:29 am

celebrate the holidays in a new hyundai palisade...

March 25, 2026

11:06 am

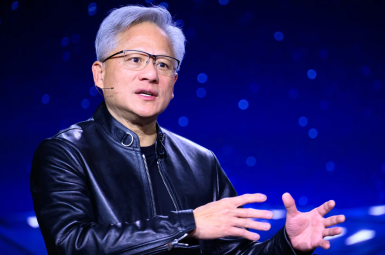

Has artificial general intelligence (AGI) already arrived? According to Jensen Huang, the answer is yes—or at least, closer than most experts are willing to admit. Speaking on the Lex Fridman Podcast, the NVIDIA CEO made...

March 25, 2026

5:52 am

explore the 2025 jeep compas: adventure awaits!...

March 25, 2026

5:41 am